Platform

Use Cases

Many Possibilities. One Platform.

AI and Automation

The Always-on Incrementality Platform

Solutions

Teams

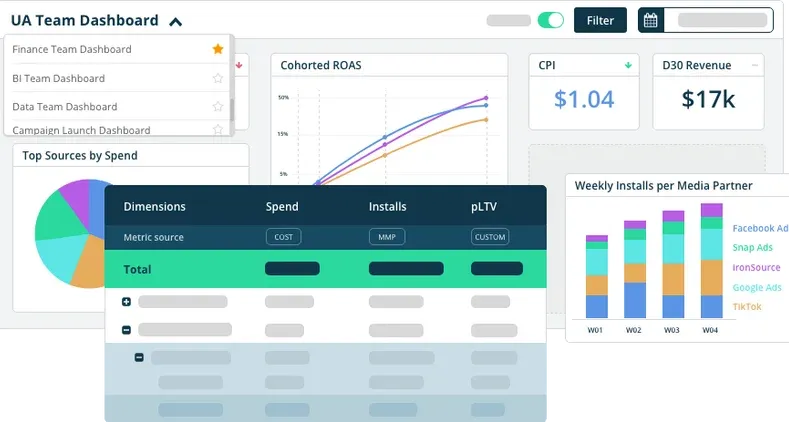

Built for your whole team.

Industries

Trusted by all verticals.

Mediums

Measure any type of ad spend

Unlock the Value of your Ad Spend

Incrementality is the holy grail of marketing. Marketers would love it if they could know that every new customer was correctly attributed to the channel that caused them to convert.

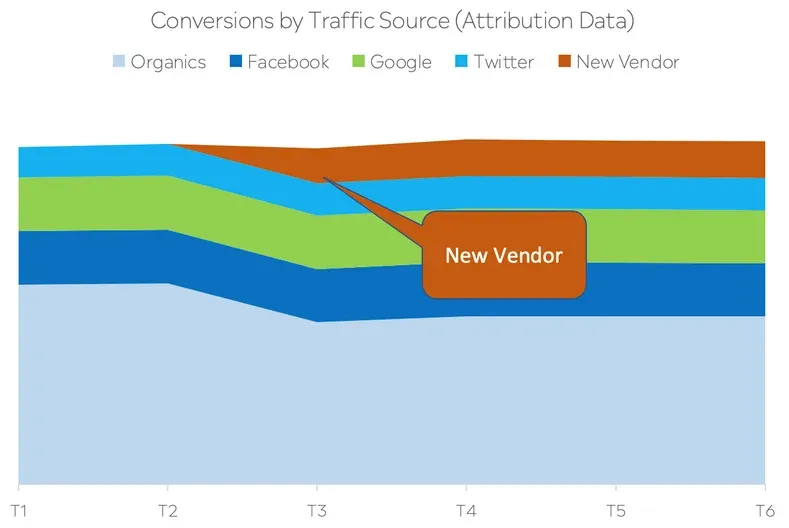

Marketers have long relied on attribution data to analyze which campaigns and media vendors are responsible for performance.

Does having an attribution measurement solution solve the problem of incrementality measurement? No.

Attribution has been the greatest gift digital advertising had to offer marketers. Assigning dedicated links to campaigns and ad vendors, and adding parameters for granular information such as location, creative and demographics, gave marketers a data-driven picture for analyzing marketing results.

Attribution works in real time, giving marketers a “proxy” for understanding how ads correlate with customer and sales activity.

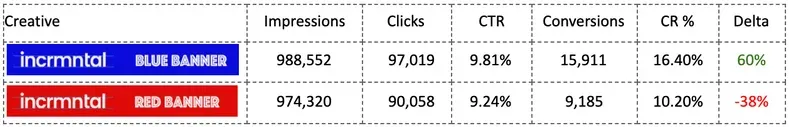

Attribution is great for telling apart the performance of different creatives.

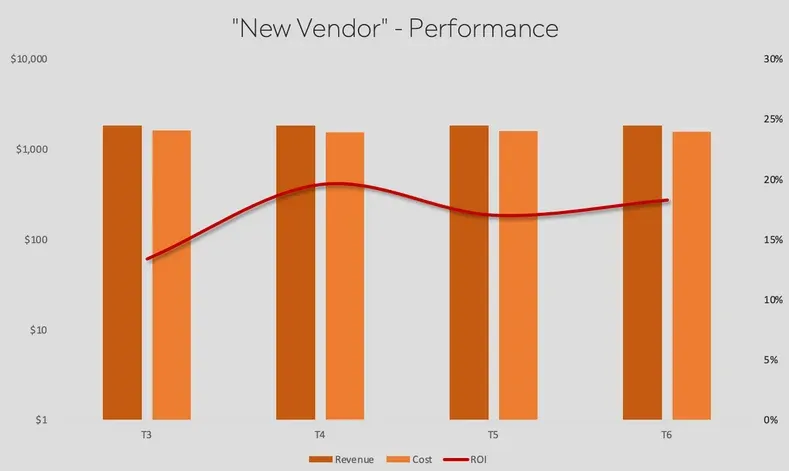

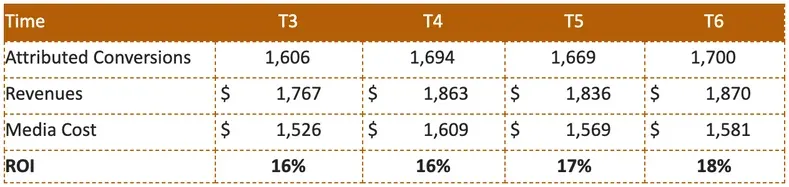

The graph and table below show the reported ad spend and revenues from new customers attributed to “new vendor”.

Based on last-touch attribution data, this new vendor generates a nice return on investment.

When zooming out to see overall results, the user acquisition graph looks as follows:

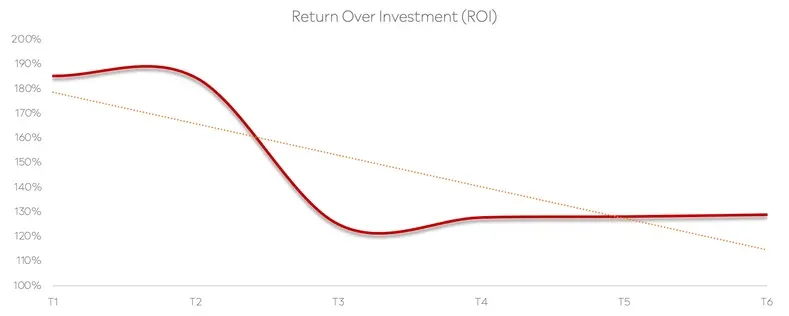

This would mean that while the new vendor’s ROAS looks positive - the total ROI decreased.

Return on Ad Spend (ROAS) ignores total ROI, leading marketers to waste and non-incremental results. Uber and Airbnb found that over 80%(!) of their performance advertising spend was redundant. And this is just the tip of the iceberg.

Incremental return on investment takes a more holistic approach to measurement, always monitoring the results across the board, while taking into account the results as reported by last-touch attribution and media cost data.

This approach looks at the conversion changes caused by paid marketing, to come up with an understanding of the real incremental sales lift and incremental ROI.
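To make the gap between a vendor's ROAS and the overall picture concrete, here is a minimal sketch in Python. All figures are hypothetical and invented for illustration only, and the function names are assumptions rather than part of any real platform. It uses the common definitions ROAS = attributed revenue / spend and ROI = (revenue - spend) / spend.

```python
def roas(attributed_revenue: float, spend: float) -> float:
    """Return on ad spend: revenue credited to a vendor divided by its spend."""
    return attributed_revenue / spend


def roi(revenue: float, spend: float) -> float:
    """Return on investment: net gain divided by spend."""
    return (revenue - spend) / spend


# Hypothetical totals before and after adding "New Vendor" (illustrative only).
spend_before, revenue_before = 10_000.0, 30_000.0
vendor_spend, vendor_attributed_revenue = 5_000.0, 10_000.0
spend_after, revenue_after = 15_000.0, 33_000.0

# What last-touch attribution reports for the vendor.
vendor_roas = roas(vendor_attributed_revenue, vendor_spend)

# What actually happened to the whole account.
total_roi_before = roi(revenue_before, spend_before)
total_roi_after = roi(revenue_after, spend_after)

# Revenue that would not exist without the extra spend, and its return.
incremental_revenue = revenue_after - revenue_before
incremental_roi = roi(incremental_revenue, vendor_spend)

print(f"Vendor ROAS (attributed): {vendor_roas:.2f}")
print(f"Total ROI before / after: {total_roi_before:.2f} / {total_roi_after:.2f}")
print(f"Incremental ROI of the vendor spend: {incremental_roi:.2f}")
```

In this made-up scenario the vendor is credited with 10,000 in revenue, yet total revenue grew by only 3,000. The remaining attributed revenue would have arrived anyway, for example through organic traffic, so the vendor's ROAS looks healthy while the total ROI falls and the incremental ROI is negative.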

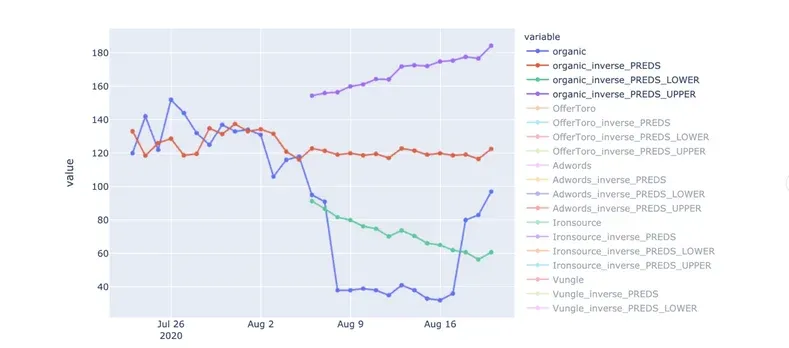

Measuring incrementality requires a continuous layer of predictive analytics overlaid on every comparable combination. Creating a synthetic cohort allows our platform to monitor the difference in differences and come up with digestible insights for marketers.

E.g. “The ROAS for ‘New Vendor’ is cannibalizing Organic traffic. Stopping the activity with ‘New Vendor’ will lead to positive incrementality”

Until recently, incrementality measurement focused on segmenting audiences into a control group, showing that group PSA or ghost ads, and comparing its results with the results of the general campaign.

This approach usually produced biased or inconclusive results, as there was no ability to know if the control group was “clean” and unaffected by other campaigns running.

Other attempts to test incrementality blacked out advertising altogether for a period of time. This approach had such high opportunity costs, and provided conclusive results only for the period in which the test ran, that most advertisers abandoned the idea of performing such tests.

Our challenge at INCRMNTAL was: How would we know if a user was going to perform an action, even if they were not advertised to?

The answer: we don’t.

Our initial idea was: we will build “better attribution”. We wanted to build an attribution solution based on 1st party data, and apply machine learning to understand the multiple touch points a user has with ads.

But this was a moot point - multi-touch is practically impossible in the mobile app ecosystem, as user data is becoming obsolete.

We also figured that offering developers yet another measurement SDK would not actually help them. No one wants to integrate another SDK.

Our research showed us that developers are not in need of “better attribution” - attribution as it is, is fine. But attribution does not provide measurement.

Making budget decisions based on attribution data has led to some major losses. Just ask Uber or Airbnb, who reported that over 80% of their ad spend was redundant.

Once we established a few ground rules, we had our direction

Once we established our ground rules, the answer was found in data science and statistics, with causal inference and difference in differences.

Causal inference is the process of determining the independent, actual effect of a particular phenomenon that is a component of a larger system. The main difference between causal inference and inference of association is that causal inference analyzes the response of an effect variable when a cause of the effect variable is changed. The science of why things occur is called etiology. Causal inference is said to provide the evidence of causality theorized by causal reasoning.

Difference in differences is a statistical technique used in econometrics and quantitative research in the social sciences that attempts to mimic an experimental research design using observational study data, by studying the differential effect of a treatment on a 'treatment group' versus a 'control group' in a natural experiment. It calculates the effect of a treatment (i.e., an explanatory variable or an independent variable) on an outcome (i.e., a response variable or dependent variable) by comparing the average change over time in the outcome variable for the treatment group with the average change over time for the control group. Although it is intended to mitigate the effects of extraneous factors and selection bias, depending on how the treatment group is chosen, this method may still be subject to certain biases.
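The difference-in-differences calculation described above can be sketched in a few lines of Python. This is a textbook two-group, two-period estimate, not a description of how the INCRMNTAL platform works. The function name, the grouping into "treated" and "control" and the daily conversion counts are all hypothetical.

```python
from statistics import mean


def did_estimate(treated_pre, treated_post, control_pre, control_post):
    """Difference in differences: change in the treated group's average
    outcome minus the change in the control group's average outcome."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical daily conversions (illustrative only).
# "Treated": a market where a new campaign launched between the two periods.
# "Control": a comparable market with no change in activity.
treated_pre = [100, 104, 96]
treated_post = [130, 126, 134]
control_pre = [80, 82, 78]
control_post = [95, 97, 93]

lift = did_estimate(treated_pre, treated_post, control_pre, control_post)
print(f"Estimated incremental daily conversions: {lift}")
```

Here the treated market grew by 30 conversions a day, but the control market grew by 15 on its own, so only 15 of the 30 are credited to the campaign. The estimate is only as good as the assumption that both groups would have followed the same trend without the treatment.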

Applying causal inference to advertising was the real challenge. Advertising is a multi-platform, high-throughput, high-scale, global, competitive and highly volatile environment with no constant, which makes approaching causal inference an extremely challenging task.

You may say that we had an apple fall on our heads when we found our “how”. A simple, yet obvious, constant in every market research call we had with Advertisers across the globe and across various verticals.

From here on, it was an “easy” task: spending the next year running data experiments, developing anomaly detection, developing statistical models and algorithms, and developing an AI brain that can translate the algorithmic outputs into simple outputs: “New Vendor has no incrementality to your activity. We recommend that you stop the campaigns launched with New Vendor.”

We spoke with many growth teams from various industries, with varying needs, to come up with the 6 use cases our platform provides answers about:

Each of these use cases works in reverse as well. This means that if you, as a marketer, lowered a bid and ROI increased, or paused a campaign and organics went up, the INCRMNTAL platform will provide you with these strategic outputs so that you are always unlocking the value in your marketing spend.

INCRMNTAL is an incrementality measurement platform providing advertisers with incrementality and cannibalization insights over their campaigns, ad networks and any marketing activity. The platform calculates incremental ROI and cost per incremental conversion.

Unlock the full value of your marketing budget.

If you want to learn more, visit INCRMNTAL or book a demo today!

Maor is the CEO & Co-Founder at INCRMNTAL. With over 20 years of experience in the adtech and marketing technology space, Maor is well known as a thought leader in marketing measurement. He previously served as Managing Director International at inneractive (acquired by Fyber) and as CEO at Applift (acquired by MGI/Verve Group).