“Can INCRMNTAL measure performance for campaigns on iOS?” is the top question mobile app companies ask us during introductory demo calls.

Apple’s ATT threw many UA managers into chaos. Ad platforms that relied on SKAdNetwork postbacks (like Facebook and Google) started showing only a fraction of the conversions they used to report, while platforms that used fingerprinting suddenly appeared to be generating more conversions. Optimization became a real challenge: under-reporting by the largest ad platforms left UA managers practically blind.

Some teams focused on optimizing conversion values so that they could get more SKAdNetwork postbacks. Some asked their MMP to continue fingerprinting (against Apple’s policy…), but the challenge remained. If a company had built infrastructure for its UA activities that relied on user-level attribution, Apple’s ATT effectively broke those systems.

INCRMNTAL must be the only company that humbly celebrated Apple’s announcement. While we’re not an “iOS attribution platform”, we were lucky to be in a position where user-level data is meaningless for our methodology. But rather than gloat over other people’s pain, let’s focus on solutions.

The examples below are all real measurements of campaigns running on iOS. For some of them, we also know how many conversions were attributed by click-based, user-level attribution, which lets you see the difference. From our perspective, user-level attribution is not “worse or better” than incrementality: it is a great proxy on its own, and we acknowledge the benefits it has. You can read about the differences between last-touch and incrementality in this article.

Rather than trying to “better attribute the last click”, we root our measurement methodology in causal data science, using time series analysis and predictions.

Customers share only aggregated data with us (we don’t want to get any user-level data!), allowing our platform to log changes in marketing activities.

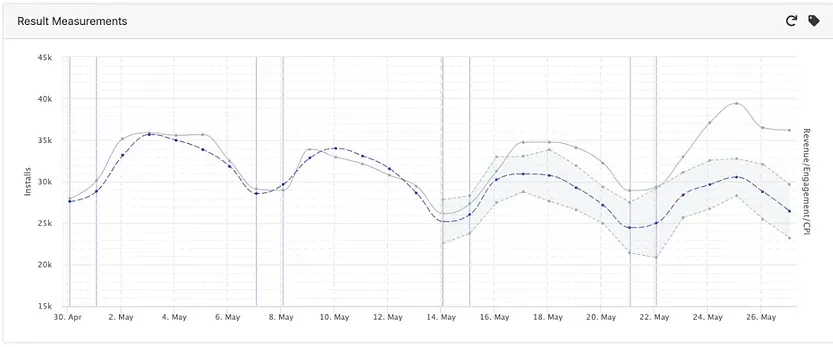

Whenever you click “Measure” on any marketing activity, our models generate millions of time series to produce a prediction that answers the question: “What would have happened if we hadn’t made the specific change we want to measure?”

It looks like this:

The above example is a very simple one, as it measures a change from 0 to 1. The campaign budget increased on the 11th of April, and given that there were few other changes, the difference was easy to measure.
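To make the “what would have happened otherwise” idea concrete, here is a deliberately naive sketch. It is not INCRMNTAL’s model: it assumes that, without the change, daily conversions would simply have stayed at their pre-change average. The function name and the daily numbers are hypothetical and only illustrate how a counterfactual prediction turns into an incremental lift.

```python
from statistics import mean


def incremental_lift(daily_conversions, change_index):
    """Toy counterfactual: assume that without the change, conversions
    would have stayed at the pre-change daily average (an assumption,
    not the platform's actual model)."""
    pre = daily_conversions[:change_index]
    post = daily_conversions[change_index:]
    if not pre or not post:
        raise ValueError("need data on both sides of the change")
    baseline = mean(pre)
    # Predicted conversions for each post-change day had nothing changed.
    counterfactual = [baseline] * len(post)
    # Lift = what actually happened minus what was predicted.
    return sum(actual - predicted for actual, predicted in zip(post, counterfactual))


# Hypothetical daily conversions; the budget change happens on day index 4.
daily = [100, 110, 90, 100, 150, 160, 140]
lift = incremental_lift(daily, change_index=4)
print(lift)
```

A real causal model would also have to account for seasonality, trends, and other marketing changes happening at the same time, which a flat baseline ignores.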

Mobile attribution is typically based on impressions and clicks: it matches a conversion to the last impression a user saw (or the last click they made) before converting.

With ATT, user-level tracking is mostly obsolete, as users are prompted to “allow tracking” in every single app that wants to access their device identifier (IDFA).

The opt-in must be dual-sided for tracking: the user needs to allow tracking both in the app where they saw the ad and in the advertiser’s app. Learn more about SKAdNetwork here

In this iOS measurement example, attribution tracked only 4,760 conversions as a result of this increase in spend, while the causality model (above) clearly showed that the action (increasing ad spend) led to thousands of incremental conversions.

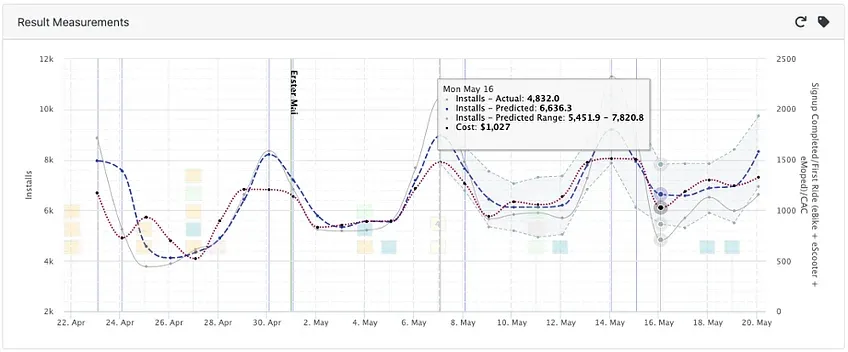

This example also shows drastic differences between SKAd and INCRMNTAL.

In the example below, the customer opened Facebook as a channel during May, and the causality model was able to attribute an incremental impact of 33,260 installs, while SKAd reported only 705.

Had the UA manager made a decision based on SKAd, they would have had to assume a CPI of $47.6, while the actual CPI from this spend was $1.00, much more aligned with reality and with the cost per install the customer was seeing across all paid channels and across Android campaigns.
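The gap between the two CPIs follows directly from the install counts. The sketch below works through that arithmetic. The spend for this channel is not stated in the article, so `implied_spend` is backed out from the SKAd figures and should be read as an assumption; the helper name `cpi` is also just illustrative.

```python
def cpi(spend: float, installs: int) -> float:
    """Cost per install for a given spend and install count."""
    if installs <= 0:
        raise ValueError("installs must be positive")
    return spend / installs


skad_installs = 705            # installs reported via SKAd
incremental_installs = 33_260  # installs attributed by the causality model

# For the same spend, CPI scales inversely with the install count,
# so the SKAd-based CPI is inflated by this factor.
undercount_factor = incremental_installs / skad_installs

# Assumption: spend is not given, so derive it from the quoted SKAd CPI.
implied_spend = 47.6 * skad_installs
incremental_cpi = cpi(implied_spend, incremental_installs)

print(round(undercount_factor, 1))  # about 47x
print(round(incremental_cpi, 2))    # roughly $1 per install
```

The derived incremental CPI lands within a cent or so of the $1.00 quoted above; the small gap is consistent with rounding in the published figures.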

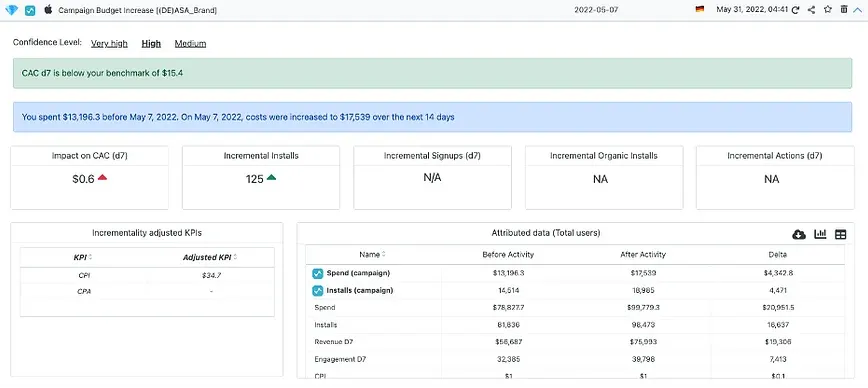

In this next example, one of the only platforms whose user-level tracking was not impacted at all was (no surprise) Apple Search Ads.

Here, we see a measurement of a budget increase from $13,196 to $17,539, where user-level tracking showed a whopping 4,471 installs (CPI of $0.97).

However, incrementality analysis showed that this budget increase only yielded 125 incremental installs – making the adjusted (incremental) CPI for this increase: $34.7!
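The two CPI figures for this Apple Search Ads example can be reproduced from the numbers quoted here. This is a minimal sketch of the arithmetic only; the helper name `cost_per_install` is illustrative, and it assumes both CPIs are computed on the additional spend (the budget after the increase minus the budget before).

```python
def cost_per_install(spend: float, installs: int) -> float:
    """Cost per install for a given spend and install count."""
    if installs <= 0:
        raise ValueError("installs must be positive")
    return spend / installs


budget_before = 13_196
budget_after = 17_539
extra_spend = budget_after - budget_before  # the budget increase being measured

attributed_installs = 4_471   # installs credited by user-level tracking
incremental_installs = 125    # installs the increase actually caused

attributed_cpi = cost_per_install(extra_spend, attributed_installs)
incremental_cpi = cost_per_install(extra_spend, incremental_installs)

print(extra_spend)
print(round(attributed_cpi, 2))
print(round(incremental_cpi, 2))
```

Under that assumption the attributed CPI comes out at about $0.97 and the incremental CPI at about $34.7, matching the figures in the text.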

Lowering the ad spend drastically revealed that this campaign was in fact not generating incremental installs, but was mostly cannibalizing conversions that the customer would have gotten anyway.

If you want to learn more about causal measurement without the need to run any experiments, feel free to schedule a demo with us and we would love to show you our methodology for measurement.

INCRMNTAL is an incrementality measurement platform, providing marketers with a 360 measurement tool for all of their marketing activities. The platform does not require any user-level data, and operates in an always-on setup without any need for experiments or holdouts.

Maor is the CEO & Co-Founder at INCRMNTAL. With over 20 years of experience in the adtech and marketing technology space, Maor is well known as a thought leader in marketing measurement. He previously served as Managing Director International at inneractive (acquired by Fyber) and as CEO at Applift (acquired by MGI/Verve Group).