Nobody wakes up one morning and decides to question everything they know about marketing measurement. Well, at least not sane people.

The idea “let’s measure the true incremental value of our ad spend” doesn’t just happen in a planning meeting. It doesn't happen at a conference. It doesn't happen because someone read a whitepaper and had an epiphany. As much as we (at I-N-C-R-M-N-T-A-L) would love for this to be the case.

While we wish there were a month, or even just a day, dedicated to it, incrementality awareness happens by accident.

Here are some of the top scenarios companies shared with us when we asked: “What caused you to want to measure incrementality?”

Here's a story every growth marketer has lived at least once - even if they didn't realize what they were witnessing at the time.

It's the last week of the month. The media budget is exhausted. Google campaigns pause. TikTok goes dark. The performance dashboard looks like a ghost town.

And then... nothing changes.

Sales keep coming in. Installs keep rolling. The conversions that were supposedly being driven by all that spend? They're still happening. Without the spend.

Most people rationalize this. "Organic momentum." "Word of mouth." "Seasonal lift." They top up the budget the following month, the attributed numbers go back up, and the whole machine keeps spinning. Nobody asks the obvious question: wait - were we actually doing anything?

Some people do ask. Those people have discovered they have an incrementality problem.

Same story, different format.

An advertiser scales aggressively. Budgets go up across all channels. Attribution dashboards light up green - ROAS looks great, CPI is within target, everything is humming. Then a billing issue freezes the card. Campaigns pause involuntarily for 72 hours.

Organics spike.

Not because of some mysterious force. Because a significant chunk of what was being "attributed" to paid media was actually cannibalization of users who were going to convert anyway. The paid campaigns weren't acquiring new customers. They were putting a toll booth on your existing traffic - and charging you to drive through it.

A version of this is exactly what happened to eBay, Uber, Airbnb, and countless others. All eventually discovered that a significant share of their ad spend was redundant. Not underperforming. Redundant. They were paying millions to hand out credit for customers who were already on their way.

They didn't figure this out from a dashboard. They figured it out when someone, somewhere, had the courage (or sometimes the naivety) to turn something off.

Because we built an entire industry on a broken ruler - and then spent two decades optimizing with it.

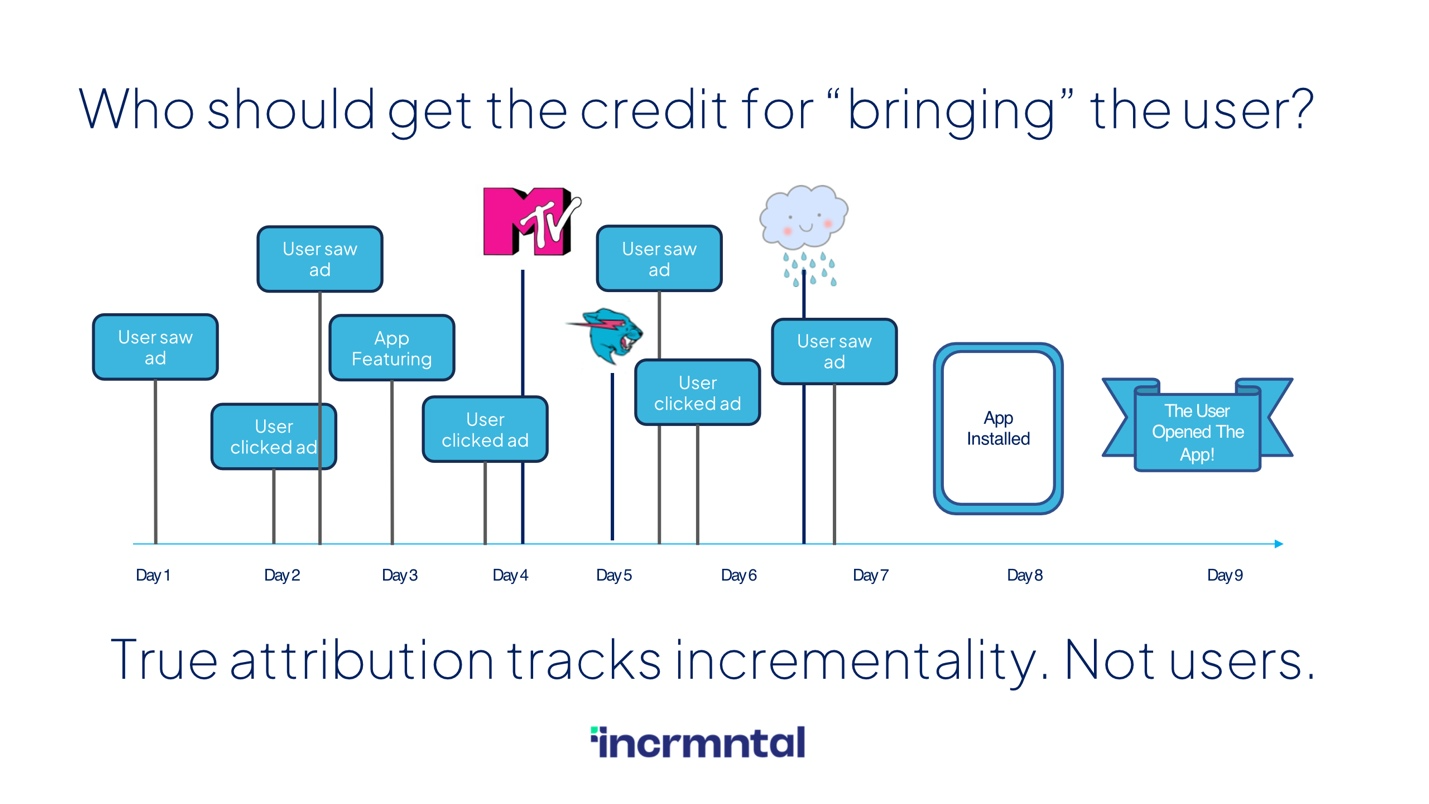

The original promise of attribution was seductive: "everything is trackable." A user clicks an ad, downloads an app, makes a purchase - and a postback fires, credit is assigned, and somewhere in an MMP dashboard a number goes up. Clean. Deterministic. Apparently scientific.

The problem is what attribution actually measures: the last thing that happened before a conversion. Not the cause of the conversion. There's a massive difference between those two things, and we've been conflating them for 20 years.

Attribution asks: which ad got the credit?

That's the wrong question entirely.

The right question - the one almost nobody was asking - is: would this conversion have happened anyway, without the ad?

That's incrementality.

It gets worse. Because attribution isn't just passively broken - it's actively gamed.

Media vendors are incentivized to show performance. Their optimization algorithms target users most likely to convert. And the users most likely to convert? They're the ones who were probably going to convert organically anyway. So the vendor serves them an ad, the user converts, the postback fires, and the vendor gets credit.

Think of it like a random sales clerk placing a sticker on your item right before you pay at the register - to claim their commission. You already had the item. You were already walking to the checkout. But they get paid anyway.

And - here's the part that stings - you're the one paying the commission.

This isn't a minor rounding error. It's a structural feature of how last-click attribution works, and it means the entire optimization feedback loop most marketers rely on is partially built on false signal.

Because when everything looks fine on paper, nobody looks under the hood.

Attribution dashboards are designed to show performance. They are not designed to show when something does NOT perform. The system produces a story where performance is always happening - and every dollar you spend is justified by the number that follows it.

The only time this narrative breaks down is when something external forces a disruption. The budget runs out. A card gets declined. Someone makes a fortunate mistake. Suddenly the attribution model's "correlation" is exposed as what it always was: credit assignment, not causality.

Most marketers file these moments away as anomalies. A few stop and think about what they actually mean.

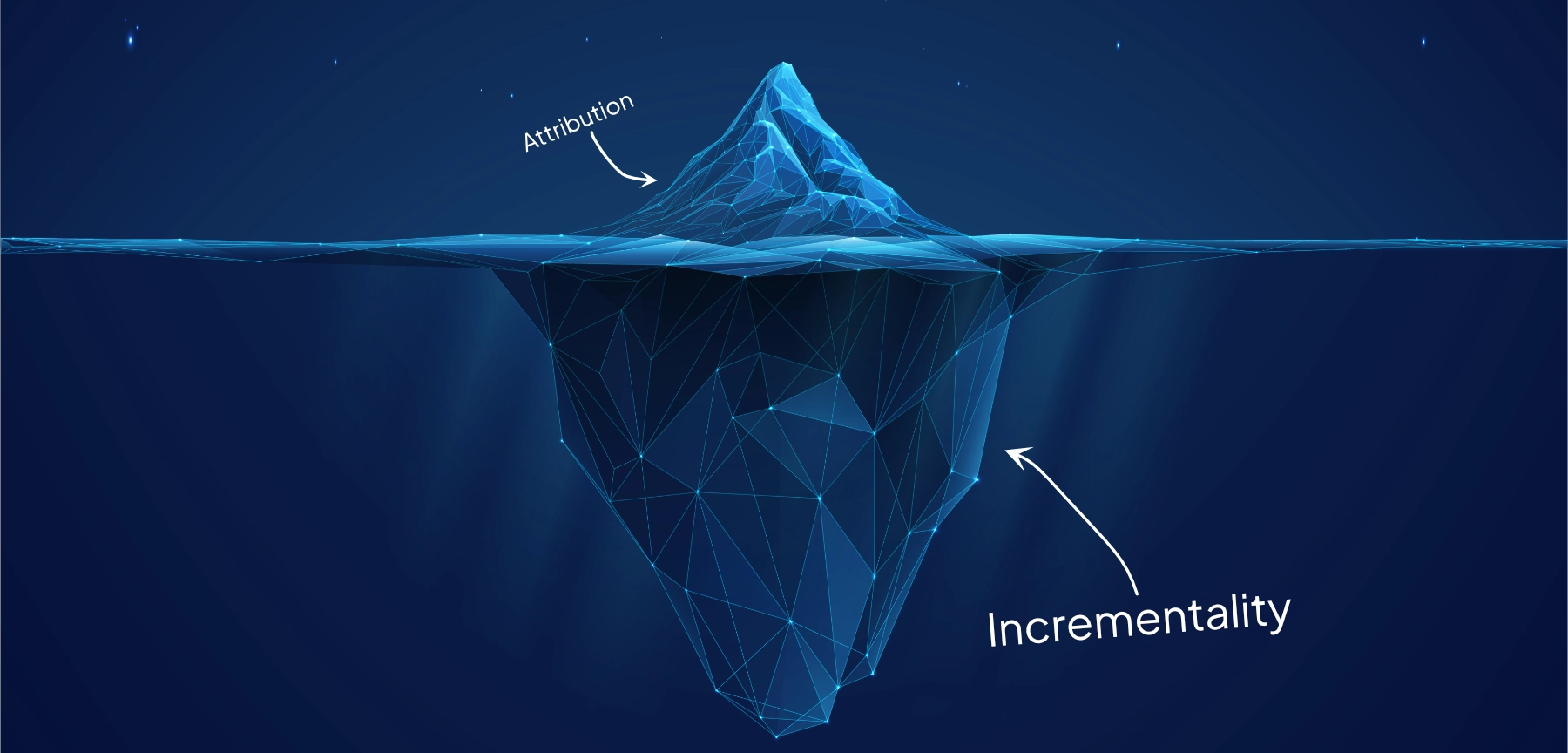

Incrementality isn't a replacement for attribution. It answers a different question.

Attribution tells you which channel got the last touch. Incrementality tells you whether that touch actually caused anything. It's the counterfactual - the parallel universe where the campaign didn't run, and you can observe what truly changed because of your marketing.

Done right, it tells you things that attribution never can: that your Google branded search campaigns are mostly capturing people who would have found you anyway. That your retargeting campaigns have a 60% cannibalization rate. That the new channel you launched last month has zero incremental lift - despite a ROAS that looks perfectly healthy.
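To put rough numbers on that counterfactual, here's a minimal Python sketch. Everything in it is an illustrative assumption: the function name, the inputs, and the figures are made up for the example, and the hard part (estimating what total conversions would have been without the campaign) is simply taken as given. It is not a description of how any particular platform computes incrementality.

```python
def incrementality_summary(total_with_ads, total_without_ads, attributed):
    """Compare attributed conversions with the estimated incremental ones.

    All inputs are hypothetical conversion counts over comparable periods:
      total_with_ads    - total conversions observed while the campaign ran
      total_without_ads - estimated total conversions had it not run
                          (the counterfactual, assumed known here)
      attributed        - conversions the attribution dashboard credits
                          to the campaign
    """
    if attributed <= 0:
        raise ValueError("attributed must be a positive number")

    # Incremental conversions: what actually changed because of the campaign.
    incremental = total_with_ads - total_without_ads

    # Share of attributed conversions that were truly incremental.
    incremental_share = incremental / attributed

    # The rest would have happened anyway.
    cannibalization_rate = 1 - incremental_share

    return {
        "incremental": incremental,
        "incremental_share": incremental_share,
        "cannibalization_rate": cannibalization_rate,
    }


# Made-up example: the dashboard credits 1,000 conversions to the campaign,
# but total conversions only rose from an estimated 4,600 to 5,000.
summary = incrementality_summary(
    total_with_ads=5000, total_without_ads=4600, attributed=1000
)
print(summary)
```

With these made-up numbers, the dashboard credits the campaign with 1,000 conversions, but total conversions only rose by 400. In other words, 60% of the attributed conversions would have happened anyway.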

These aren't exotic insights reserved for companies with PhDs on staff. They're the basic facts of your business. You're just not seeing them - because the ruler you're using is broken.

You don't need to run out of budget to figure this out.

The moments that reveal the measurement problem - paused campaigns, budget freezes, organic spikes when paid goes dark - are accidents. But what they reveal isn't accidental at all. It's always been there. The signal exists in your data right now, if you know how to look for it.

Plotting your paid and organic results side by side when you launch a new vendor. Watching what happens to total conversion volume - not just attributed volume - when costs go up. Checking whether your growth at the end of the month, when budgets run out, looks any different from growth at the beginning.

These are not sophisticated tests.

They're basic sense checks.

And for most advertisers, they may produce uncomfortable answers. They may produce the truth.
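One of those sense checks, comparing total conversions on days when paid media ran against days when it went dark, can be sketched in a few lines of Python. The function name, the row layout, and the sample numbers are hypothetical, and this is a rough sanity check rather than a causal test: seasonality, paydays, and promotions can all move the result.

```python
from statistics import mean


def dark_day_check(daily_rows):
    """Compare average total conversions on paid days vs. dark days.

    daily_rows is an iterable of (spend, total_conversions) tuples, one per
    day. total_conversions must be ALL conversions (paid + organic), not
    just the attributed ones.
    """
    rows = list(daily_rows)
    on_days = [conv for spend, conv in rows if spend > 0]
    off_days = [conv for spend, conv in rows if spend == 0]
    if not on_days or not off_days:
        raise ValueError("need at least one day with spend and one without")

    avg_on = mean(on_days)
    avg_off = mean(off_days)

    # How much total volume dropped when paid media went dark, in percent.
    drop_pct = (avg_on - avg_off) / avg_on * 100

    return {"avg_on": avg_on, "avg_off": avg_off, "drop_pct": drop_pct}


# Made-up month-end data: three days with spend, two days after the budget ran out.
sample = [
    (1000, 520),
    (1000, 500),
    (1000, 510),
    (0, 495),
    (0, 505),
]
result = dark_day_check(sample)
print(f"Avg with spend: {result['avg_on']}, "
      f"avg dark: {result['avg_off']}, "
      f"drop: {result['drop_pct']:.1f}%")
```

If total volume barely moves when spend drops to zero, as in this invented sample, that is a cue to dig deeper, not proof on its own.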

Jack Nicholson was wrong about a lot of things. But he was onto something when he said "you can't handle the truth." Because most marketing teams, staring at green dashboards and healthy ROAS numbers, genuinely can't. Not because they're incompetent - because the system is designed to never show it to them.

The question isn't whether the truth is out there. It's whether you're willing to look for it before the budget runs out and forces your hand.

At INCRMNTAL, we built our platform specifically for marketers who've had that moment - or who want to find it before the budget runs out. Always-on incrementality measurement, no experiments required.