I've been writing about advertising on LLMs since before there was anything to advertise on. The Zero Click to $100bn piece. The Ads on ChatGPT piece. The full LLM Advertising resource. If you've been following along, you know my position: advertising is coming to LLMs whether the industry is ready or not, and the measurement problem is going to be worse than anything we've seen in mobile.

So when actual results started landing in the INCRMNTAL platform, I had two reactions.

First: FINALLY!

Second: oh god, what if I was wrong?

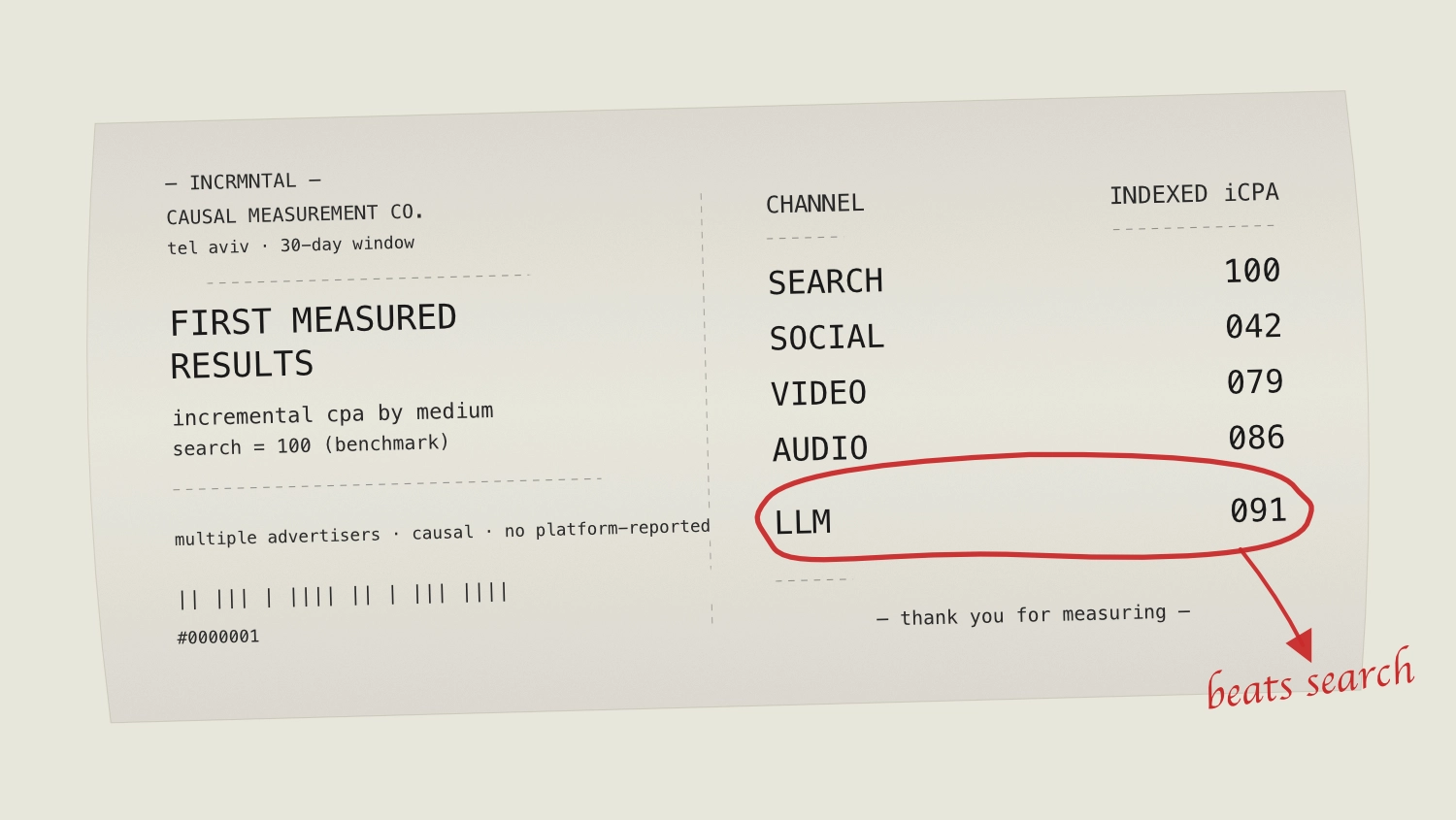

Today, I’m very glad to share with you what 30 days of incremental measurement of campaigns running on OpenAI across multiple Advertisers actually shows.

Incremental CPA on LLM channels is competitive with Search. Not directionally close. Not "promising for the future." Competitive right now.

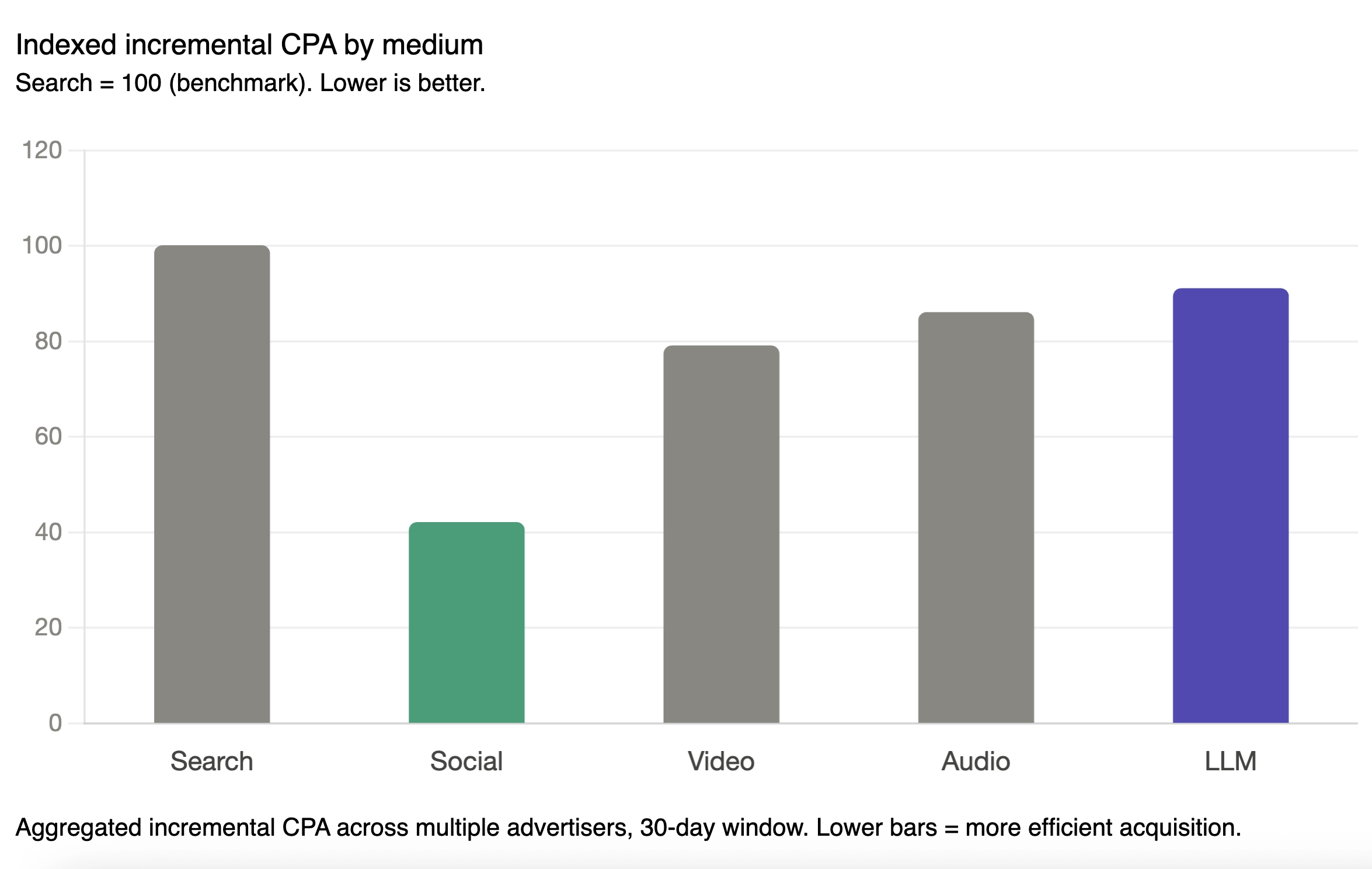

Search doesn’t just sit near the benchmark. Given its size (Search represents 34% of the total ad spend we see at INCRMNTAL), Search IS the benchmark. Social leads the incremental performance chart at an index of 42, in line with the incrementality vs. attribution benchmarking report we recently published. LLM (or, to be blunt, OpenAI Ads) sits at 91.

LLM beats Search on incremental CPA.

Read that again, because I had to. The medium with the highest attributed cost-per-conversion in the entire dataset - $142.68 versus Search's $38.76, roughly 3.7x more expensive at the top of the funnel - ends up cheaper on an incremental cost-per-action basis than the channel that has defined paid acquisition for two decades.

That's not a rounding error. That's a different funnel shape.

Every time I write about LLM ads, somebody in the comments tells me the unit economics don't work. "Have you seen what OpenAI is going to charge?" "There's no way the CPMs make sense." And on the early conversion side (lead, install), they're right. $142 to acquire a user looks insane next to $38 from Google.

But CPI was always a vanity metric. The question that pays the bills is: of the people who signed up, how many actually did the thing you needed them to do? On that question, LLM users convert at rates that make the CPM premiums disappear.
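To make that arithmetic concrete, here is a minimal sketch of how much better the downstream conversion rate has to be for the expensive channel to come out ahead. The two cost figures and the index of 91 are the ones quoted in this post. Everything else is an assumption made for illustration: that Search sits at an index of 100, that the index is proportional to incremental CPA, and that incremental CPA can be approximated as upfront cost divided by the downstream action rate. The function names are hypothetical and this is not a description of how the INCRMNTAL platform computes its numbers.

```python
# Figures quoted in this post.
LLM_ATTRIBUTED_COST = 142.68     # attributed cost per conversion, LLM
SEARCH_ATTRIBUTED_COST = 38.76   # attributed cost per conversion, Search
LLM_INDEX = 91.0                 # indexed incremental CPA, LLM

# Assumption for illustration: Search, as the benchmark, sits at 100,
# and the index scales linearly with incremental CPA.
SEARCH_INDEX = 100.0


def upfront_cost_ratio(cost_a: float, cost_b: float) -> float:
    """How many times more channel A costs per early conversion than B."""
    return cost_a / cost_b


def required_rate_lift(cost_ratio: float, index_a: float, index_b: float) -> float:
    """Downstream action-rate multiple channel A needs over channel B.

    Assumes iCPA = upfront cost / downstream action rate, so
    iCPA_a / iCPA_b = cost_ratio / rate_lift.
    """
    icpa_ratio = index_a / index_b
    return cost_ratio / icpa_ratio


cost_ratio = upfront_cost_ratio(LLM_ATTRIBUTED_COST, SEARCH_ATTRIBUTED_COST)
lift = required_rate_lift(cost_ratio, LLM_INDEX, SEARCH_INDEX)

print(f"Upfront cost ratio: {cost_ratio:.2f}x")            # 3.68x
print(f"Implied downstream rate lift: {lift:.2f}x")        # 4.05x
```

Under those assumptions, an LLM-acquired user would need to complete the downstream action at roughly four times the rate of a Search-acquired user for the indexed iCPA to land at 91.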

The intuition is simple once you say it out loud. Someone who signs up for your service or installs your app after asking ChatGPT a detailed question about the problem your product solves is qualitatively different from someone who registers or installs because the creative interrupted their feed. The LLM user already wrote the brief. They came pre-qualified.

This isn't a hypothesis anymore. It's in the data.

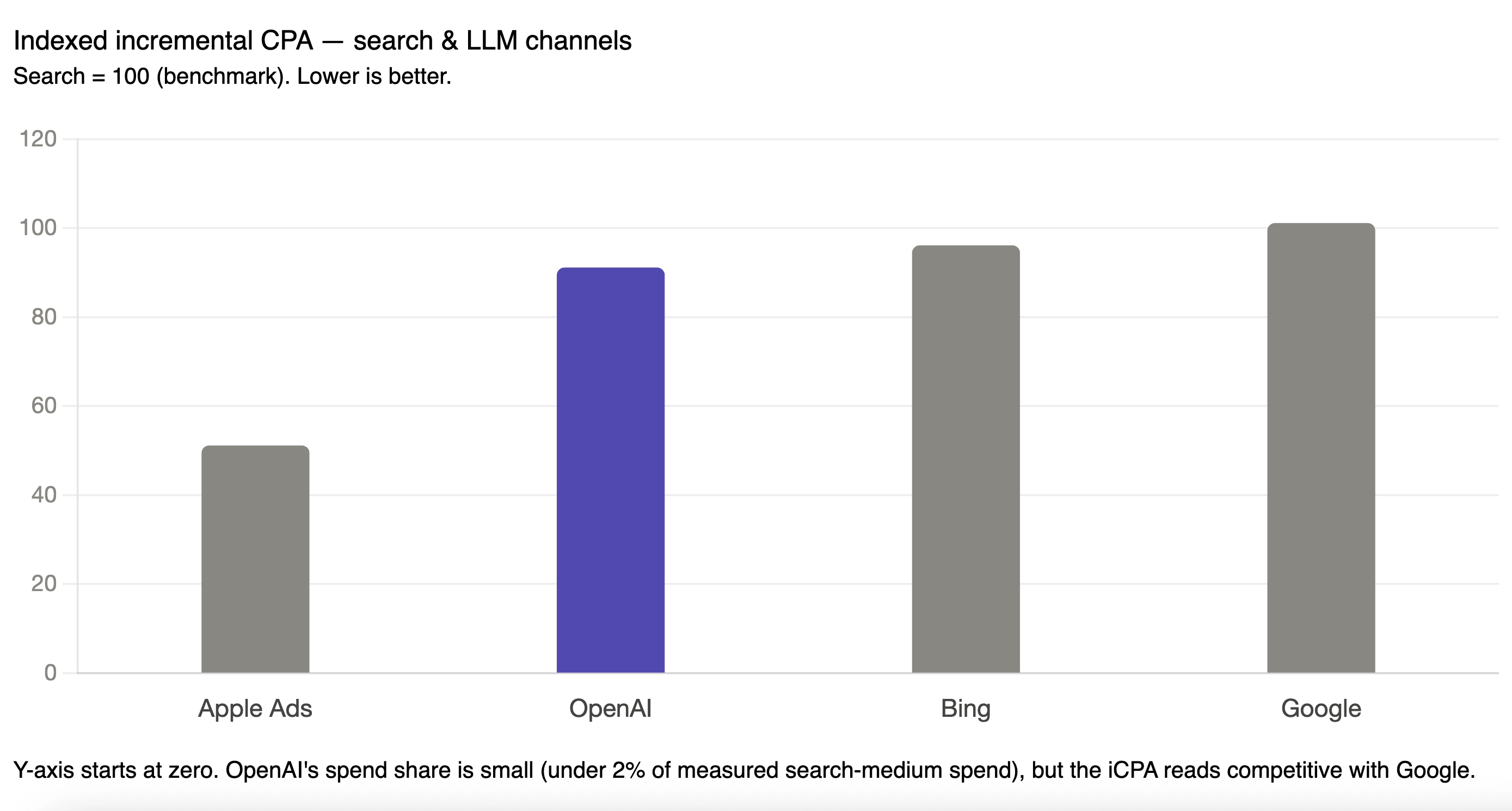

Inside the search medium itself, the channel breakdown gets more interesting.

Google indexes at 101. Bing at 96. OpenAI at 91. Apple Ads at 51, which is its own conversation.

OpenAI is beating Google on incremental CPA. By ten points. In a 30-day window. Across multiple Advertisers.
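For a sense of scale, the index points can be turned into relative differences. This is a rough sketch that assumes the index is proportional to incremental CPA, which the post does not state explicitly; the index values are the ones listed above, and the helper name is hypothetical.

```python
# Indexed incremental CPA per channel, as quoted above.
indices = {"Google": 101, "Bing": 96, "OpenAI": 91, "Apple Ads": 51}


def relative_gap(index: float, reference: float) -> float:
    """Fractional difference of a channel's index versus a reference index.

    Negative values mean a lower (better) incremental CPA than the reference,
    assuming the index is proportional to incremental CPA.
    """
    return (index - reference) / reference


google = indices["Google"]
gaps = {name: relative_gap(idx, google) for name, idx in indices.items()}

for name, gap in gaps.items():
    print(f"{name}: {gap:+.1%} vs Google")
```

On that reading, ten index points against Google's 101 works out to an incremental CPA about 9.9% lower for OpenAI.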

Now - and I want to be very direct about this because it's the question I'd ask if someone else were presenting these numbers - the OpenAI sample is tiny. We're talking about $1M of measured spend versus Google's $27M (last 30 days, same advertisers). That's a 27x gap. Anything you read into the OpenAI number has to come with a caveat: this is early-adopter spend, on a channel that may select for a particular kind of audience, at a point in the platform's commercial life where everything is unusual.

But "directional with a small sample" is still directional. And the direction is: when you pay roughly 4x more per install on OpenAI, you get users who convert efficiently enough that your incremental cost per action lands below what Google delivers.

If that holds as OpenAI's ad business scales - and there's no obvious mechanical reason it shouldn’t - the implication is that Google's search dominance may have some real competition.

Apple Ads shows up at 51 on indexed iCPA, which would make it the best-performing channel in the entire dataset. I'm not going to celebrate that number, and you shouldn't either.

Apple Search Ads are shown to the user at the exact moment they're searching for an app, inside the App Store. The intent is so real that incrementality measurement will often show incredible results (which these are). I’ve covered brand keyword incrementality previously and showed that those campaigns are a) incremental, b) very low cost, and c) difficult to scale.

The same caution does not apply to OpenAI. ChatGPT isn't an app store. The user asking ChatGPT for a recommendation is genuinely upstream of the install decision in a way that Apple Search Ads users typically aren't.

I've been arguing for two years that LLM advertising would matter. I was prepared for the first data to show it would matter eventually - in 2027, 2028, when the platforms got their act together.

Instead, the first data shows it matters now. Specifically, it matters on the metric that actually drives the business forward.

If you're allocating paid budget in 2026 and you don't yet have a line item for LLM channels, you're not being conservative - you're being late.

And if your reservation has been the inability to measure this Zero-Click medium, we invite you to get a demo of the only measurement platform that measures results even in a zero-click world.